Reputation system

The quality of services transacted on the Openfabric platform depends on the quality of the ratings collected from end users. The fundamental challenge is that a user can submit ratings that do not truthfully reflect their actual experience with the AI. Because ratings are provided outside the control of the relying party, it is difficult to know a priori when a user has submitted a dishonest evaluation. However, unfair evaluations often diverge in their statistical patterns from accurate and honest reviews [94]. Openfabric uses a Bayesian rating system [95] combined with an analytical filtering technique designed to exclude unfair ratings [96] [97]. The reputation score is an indicator of how a particular AI, infrastructure, or dataset is expected to behave in the future. Mathematically, the beta probability density function (PDF) can be defined using the gamma function $\Gamma$ as:

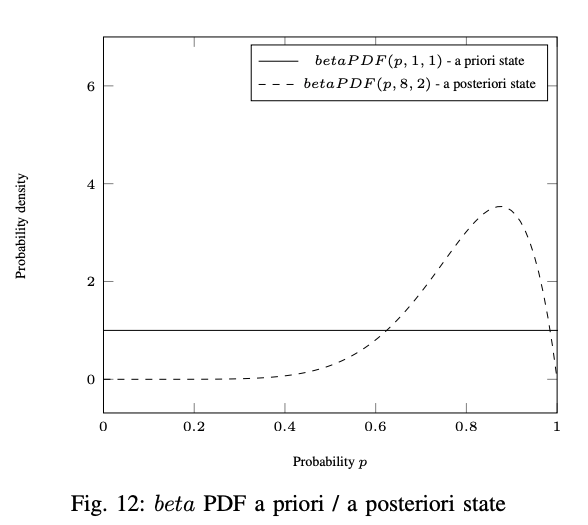

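where $\alpha$ and $\beta$ are determined by the number of positive and negative ratings: for $r$ positive and $s$ negative ratings, $\alpha = r + 1$ and $\beta = s + 1$. As depicted in Fig. 12, when nothing is known, the beta PDF is the uniform distribution, with $\alpha = 1$ and $\beta = 1$.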

The distribution readjusts after observing positive and negative evaluations. For example, the beta PDF after observing 7 positive and 1 negative outcomes ($\alpha = 8$ and $\beta = 2$) is illustrated in Fig. 12. $E(p)$ denotes the probability expectation of the beta distribution, defined as:

$$E(p) = \frac{\alpha}{\alpha + \beta}$$
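As a quick numerical check of the beta PDF and its expectation $\alpha / (\alpha + \beta)$, the following minimal Python sketch evaluates both for the two cases mentioned above. The function names are hypothetical, and the mapping from rating counts to parameters ($\alpha = r + 1$, $\beta = s + 1$) is an assumption consistent with the uniform prior at $\alpha = \beta = 1$.

```python
import math

def beta_pdf(p, alpha, beta):
    """Beta density at p, written with the gamma function."""
    norm = math.gamma(alpha + beta) / (math.gamma(alpha) * math.gamma(beta))
    return norm * p ** (alpha - 1) * (1 - p) ** (beta - 1)

def beta_mean(alpha, beta):
    """Probability expectation E(p) of the beta distribution."""
    return alpha / (alpha + beta)

def params_from_counts(r, s):
    """Assumed mapping from r positive and s negative ratings to (alpha, beta)."""
    return r + 1, s + 1

# No ratings yet: uniform prior, the density equals 1 for every p in [0, 1].
print(beta_pdf(0.3, *params_from_counts(0, 0)))

# 7 positive and 1 negative rating: alpha = 8, beta = 2, so E(p) = 8 / 10.
print(beta_mean(*params_from_counts(7, 1)))
```

With 7 positive and 1 negative rating the expectation is 0.8, which matches the peak of the distribution shifting toward 1 as positive evidence accumulates.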

The rating system is composed of vectors $\rho = [r, s]$ where $r \geq 0$ and $s \geq 0$. The aggregated rating of service $Z$ at time $t$ performed by reviewers $X$ from the community $C$ is defined as:

$$\rho^t(Z) = \sum_{X \in C} \rho^t(X, Z)$$

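Since users may change their behavior over time, it can be advisable to weight recent ratings more heavily than those cast further in the past. This is achieved by including a survival factor $\lambda$ that controls the speed at which old ratings are "forgotten". The definition updates to:

$$\rho^{t}(Z) = \sum_{X \in C} \lambda^{\,t - t_X}\, \rho^{t_X}(X, Z)$$

where $0 \leq \lambda \leq 1$, $t_X$ is the time at which reviewer $X$ cast the rating, and $t$ is the current time. The reputation score of the agent $Z$ at time $t$ is defined as follows:

$$R^t(Z) = E\big(\text{beta}(\rho^t(Z))\big) = \frac{r + 1}{r + s + 2}$$

where $r$ and $s$ are the aggregated positive and negative components of $\rho^t(Z)$.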
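The aggregation and forgetting steps can be sketched in Python as follows. This is an illustration under stated assumptions, not the platform's implementation: the `Rating` record, the function names, and the discount form (each rating weighted by the survival factor raised to its age, `now - cast_at`) are all hypothetical choices made for the example.

```python
from dataclasses import dataclass

@dataclass
class Rating:
    rater: str        # reviewer identifier (hypothetical field)
    positive: float   # r: amount of positive feedback
    negative: float   # s: amount of negative feedback
    cast_at: int      # time at which the rating was cast

def aggregate(ratings, now, survival=1.0):
    """Sum rating vectors, discounting each by survival ** age (assumed form)."""
    r = s = 0.0
    for rating in ratings:
        weight = survival ** (now - rating.cast_at)
        r += weight * rating.positive
        s += weight * rating.negative
    return r, s

def reputation(r, s):
    """Expectation of the beta distribution with alpha = r + 1, beta = s + 1."""
    return (r + 1) / (r + s + 2)

history = [
    Rating("a", positive=0, negative=4, cast_at=0),   # old, negative
    Rating("b", positive=4, negative=0, cast_at=10),  # recent, positive
]
print(reputation(*aggregate(history, now=10)))                # survival = 1: 0.5
print(reputation(*aggregate(history, now=10, survival=0.5)))  # old rating mostly forgotten
```

With a survival factor of 1 nothing is forgotten and the two ratings cancel out. Lowering the factor shrinks the weight of the old negative rating, so the score moves toward the recent positive one.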

The pseudocode of the rating function is presented below:

$C$ is the set of all evaluators

$F$ is the set of all assumed fair raters

$Z$ is the evaluated agent

$F = C$

WHILE F changes do

$\rho^t(Z)=\sum_{X\in F}\rho^{t}(X,Z)$

$R^t(Z)= E(\text{beta}(\rho^t(Z)))$

FOR rater R in F do

$f=$ beta$(\rho^t(R,Z))$

$l=$ q quantile of f

$u=$ 1-q quantile of f

IF $l > R^t(Z)$ or $u < R^t(Z)$ then

$F = F \setminus \{R\}$

ENDIF

ENDFOR

ENDWHILE

return $R^t(Z)$
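A runnable Python sketch of the filtering loop is shown below. It is one possible reading of the pseudocode under the following assumptions: all names are hypothetical; a rater is rejected when the aggregate score falls outside the interval between the $q$ and $1-q$ quantiles of that rater's own beta distribution; rejected raters are removed together at the end of each pass; and the quantiles are computed with Simpson's rule plus bisection, swapped in only to keep the example free of third-party libraries.

```python
import math

def beta_pdf(p, alpha, beta):
    """Beta density, using lgamma for numerical stability."""
    log_norm = math.lgamma(alpha + beta) - math.lgamma(alpha) - math.lgamma(beta)
    return math.exp(log_norm) * p ** (alpha - 1) * (1 - p) ** (beta - 1)

def beta_cdf(x, alpha, beta, steps=200):
    """Simpson's-rule integral of the density over [0, x]; assumes alpha, beta >= 1."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    h = x / steps
    total = beta_pdf(0.0, alpha, beta) + beta_pdf(x, alpha, beta)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * beta_pdf(i * h, alpha, beta)
    return total * h / 3

def beta_quantile(q, alpha, beta):
    """Invert the CDF by bisection on [0, 1]."""
    lo, hi = 0.0, 1.0
    for _ in range(40):
        mid = (lo + hi) / 2
        if beta_cdf(mid, alpha, beta) < q:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def filtered_reputation(ratings, q=0.01):
    """ratings maps each rater to its (r, s) vector for the evaluated agent Z."""
    fair = set(ratings)                               # F = C
    while True:
        r = sum(ratings[x][0] for x in fair)          # aggregate over fair raters
        s = sum(ratings[x][1] for x in fair)
        score = (r + 1) / (r + s + 2)                 # R(Z) = E(beta(rho(Z)))
        rejected = set()
        for x in fair:
            rx, sx = ratings[x]
            lower = beta_quantile(q, rx + 1, sx + 1)
            upper = beta_quantile(1 - q, rx + 1, sx + 1)
            if lower > score or upper < score:        # score outside [l, u]
                rejected.add(x)
        if not rejected:                              # F did not change: done
            return score, fair
        fair -= rejected

# Five raters report 85% positive; one reports 50% positive.
ratings = {f"honest{i}": (17, 3) for i in range(5)}
ratings["outlier"] = (10, 10)
score, fair = filtered_reputation(ratings, q=0.01)
print(sorted(fair), score)
```

In this example the 50/50 rater is dropped in the first pass, after which the score is computed from the remaining five raters only, giving $(85 + 1) / (85 + 15 + 2) \approx 0.843$.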

The flexibility and robustness of this algorithm come from comparing rating distributions rather than applying a fixed threshold. If the spread of ratings across all reviewers is wide, the algorithm tends not to reject individual evaluators. If one rating pattern is common among reviewers (e.g. 85% positive, 15% negative ratings) and a single reviewer deviates from it (e.g. 50% positive, 50% negative ratings), the deviating rating will be rejected. The algorithm's sensitivity can be increased or decreased by modifying the parameter $q$: a larger $q$ narrows the interval between the $q$ and $1-q$ quantiles, so more reviewers are rejected.